The Republican majority of the US House Judiciary Committee has just published a voluminous “interim staff report” (February 3rd), presenting documentary evidence of far-reaching digital censorship conducted by online platforms under the supervision of the European Commission. The report, entitled “The Foreign Censorship Threat, Part II: Europe’s Decade-Long Campaign to Censor the Global Internet…”, makes for sobering reading, corroborating the worst fears of critics of the Digital Services Act (DSA). It is worth underlining that the censorship regime enabled by the DSA should concern not only Europeans but also non-Europeans. Given the technical challenges and economic costs of instituting region-specific moderation regimes, its practical effect is to restrict speech across the globe, not only in Europe.

The DSA Paved the Way for Arbitrary Censorship

In this blog, I warned on September 5th 2023, shortly after the Digital Services Act (DSA) was applied to “very large online platforms” (VLOPs), that “the net effect of the act would be to apply an almost irresistible pressure on social media platforms to play the ‘counter-disinformation’ game in a way that would pass muster with the Commission’s auditors, and thus avoid getting hit with hefty fines.” And so, according to this report, it has come to pass.

We do not need to read the House Judiciary Committee’s interim report to understand that the wording of the Digital Services Act creates enormous discretionary power on the part of the European Commission in overseeing platforms’ content moderation policies. For the Act places online platforms under onerous “due diligence obligations” to “mitigate” vaguely defined “systemic risks,” including risks related to “disinformation” and impacts on “civic discourse” and electoral processes.

The Act in itself leaves considerable room for interpretation regarding how the European Commission and its auditors will assess “systemic risks” like disinformation, threats to “civic discourse,” and hate speech, and how they will evaluate the adequacy of service providers’ mitigation efforts. This ambiguity gives enforcers of the Act broad discretion to interpret it as they see fit. The Commission has investigative and enforcement powers under the DSA, including the ability to impose fines of up to 6% of a platform’s global annual turnover for non-compliance.

The 160-page report makes a compelling case that the Digital Services Act is effectively the culmination of a decade-long campaign to give the European Commission ever-greater power over the content moderation policies of online platforms. The many twists and turns of this campaign, which included earlier “voluntary” Codes of Conduct coordinated by EU institutions, are outlined in the report.

Here, I propose to focus exclusively on what the report presents as some of the bitter fruits of the Digital Services Act, namely the censorship actions conducted under its oversight mechanisms. The report focuses overwhelmingly on interactions between the EU Commission and TikTok, and assuming the supporting documents can be authenticated, it gives us a disturbing glimpse of a deeply entrenched censorship regime that is completely opaque to the average citizen. This is just the tip of the iceberg. There is no telling what else might be uncovered if an investigator got access to similar evidence on other platforms such as Meta, YouTube, and LinkedIn.

How DSA Oversight Works in Practice

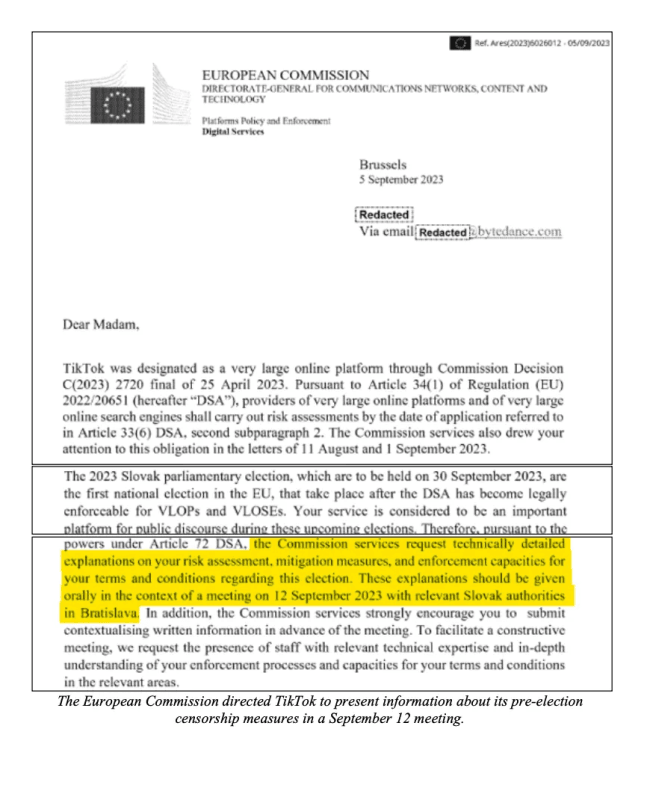

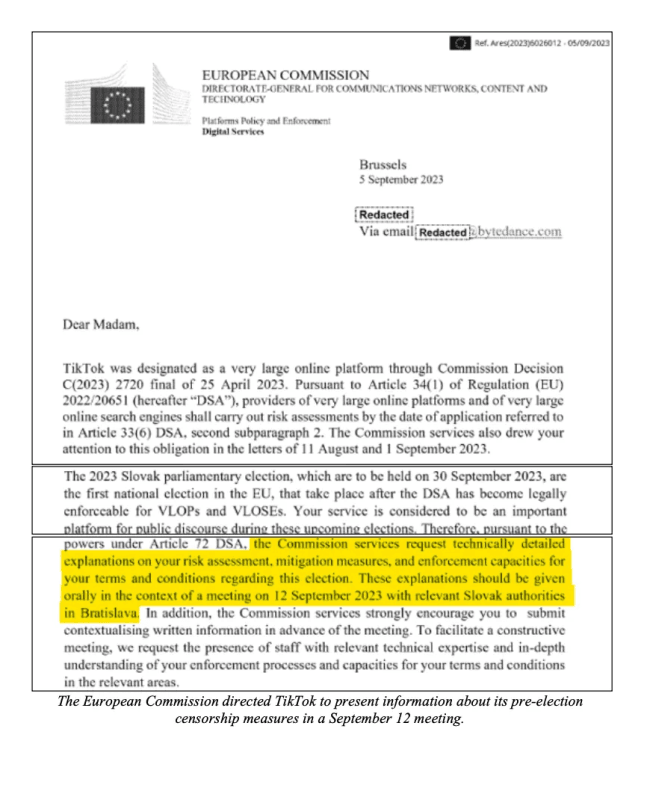

The mechanisms of control by EU officials over platform moderation policies, as outlined in the report, share a common pattern: the European Commission itself, or national regulators designated under the DSA (known as “Digital Services Coordinators” in each Member State), query platforms about their “risk mitigation” measures concerning a particular issue (e.g. vaccines, electoral disinformation, hate speech, or the war in Ukraine), either in written communications or in person, and request documentation detailing steps taken or about to be taken. Platforms then submit reports describing their compliance efforts and plans to enhance their risk mitigation strategies, after which the Commission may provide feedback or request additional measures. The House Judiciary Committee report plausibly characterizes many of these exchanges as exerting pressure on platforms to expand moderation efforts in order to avoid potential sanctions.

2024 Election Guidelines

But it is not just a generic expansion of the volume of moderation that is at stake in this report: it is also one-sided influence over the political and ideological tenor of the moderation rules. After all, the Commission is keeping a “score card” on Big Tech’s moderation policies, relying in part on its own army of “trusted flaggers,” often drawn from leftist NGOs, and is not ideologically or politically neutral itself. So it should come as no surprise that the outcome of such a process is the restriction of content that is highly critical of the EU Commission’s progressive-leftist/technocratic politics and ideological commitments.

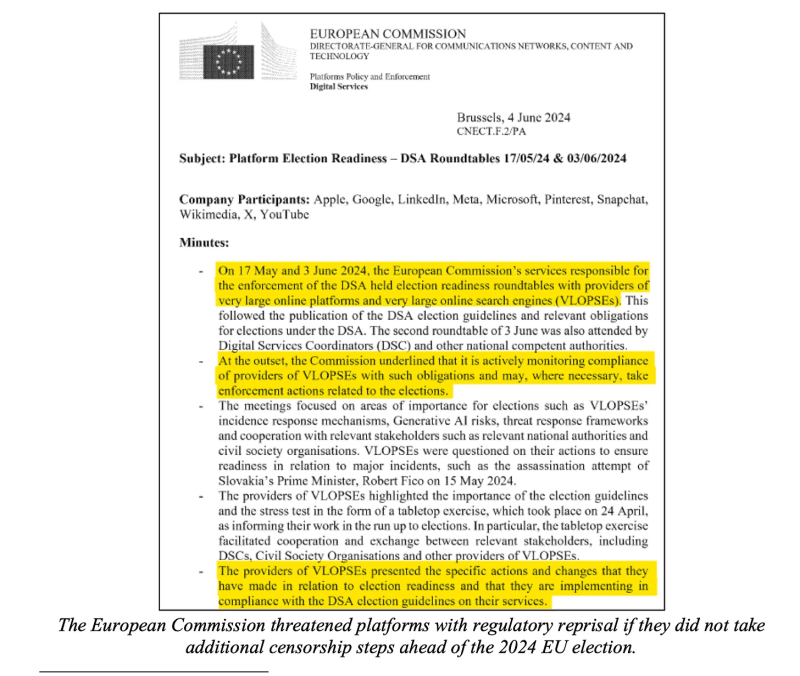

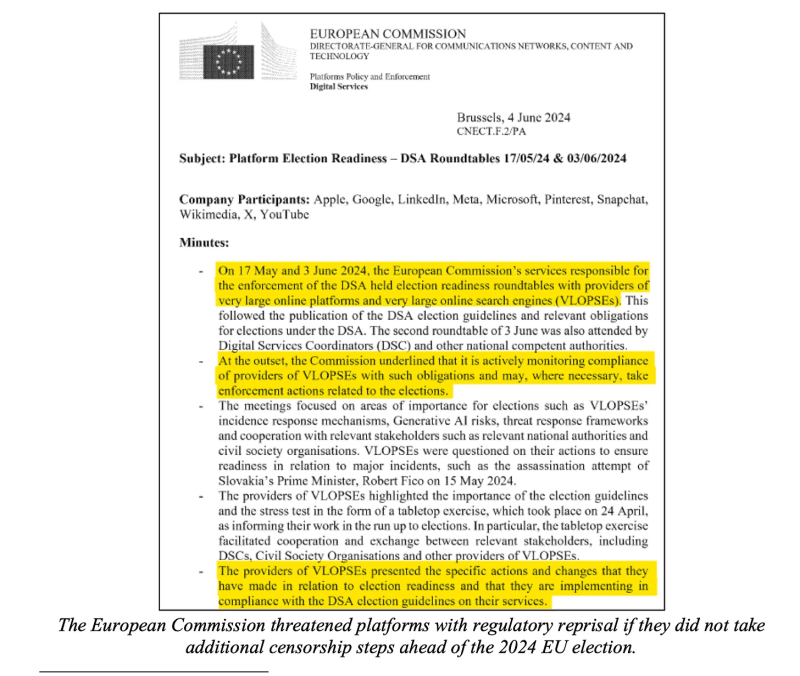

A case in point is the Election Guidelines issued by the European Commission in April 2024, prior to the European Parliament elections, urging platforms to update their risk mitigation measures, reduce the prominence of disinformation (including “gendered disinformation”), cooperate with fact-checkers, and develop measures intended to build resilience against “disinformation” narratives. The Commission invited Apple, Google, LinkedIn, Meta, Microsoft, Pinterest, Snapchat, Wikimedia, X, and YouTube to an “election readiness roundtable” and reminded them that “it is actively monitoring compliance” with election obligations under the DSA, and would “take enforcement actions related to the elections.”

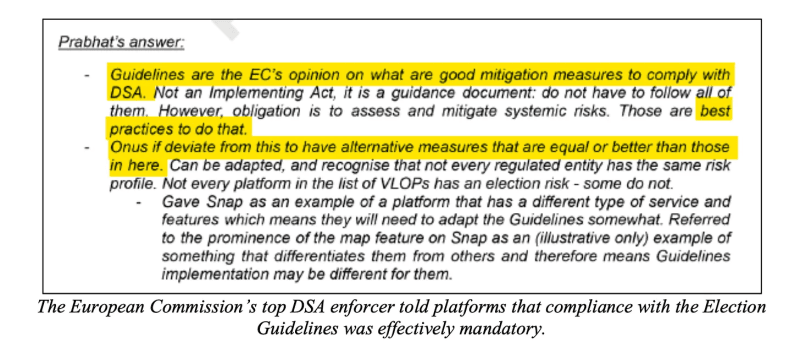

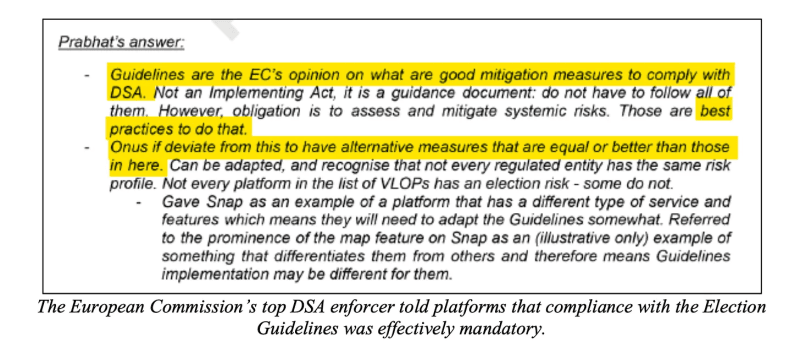

The Election Guidelines were not part of the Digital Services Act, nor were they binding law. Indeed, they were publicly framed as guidance and recommendations rather than legally binding obligations. However, the House Judiciary interim report alleges that “behind closed doors,” at a Meta roundtable on DSA Election Guidelines held on March 1st, 2024, Prabhat Agarwal, identified in the report as a senior official involved in DSA enforcement, described the Guidelines as a “floor” for DSA compliance, stating that if platforms deviated from them, they would need to “have alternative measures that are equal or better.” Strange as such a statement might seem, it is consistent with the DSA’s notion of a “due diligence obligation” on the part of large digital platforms to take reasonable measures to mitigate platform risks. If the enforcer of a vague obligation offers a foolproof way to satisfy it — even if it is presented as a “guideline” — the “guideline” becomes an attractive way to avoid being sanctioned.

Evidence of Platform Compliance: The Case of TikTok

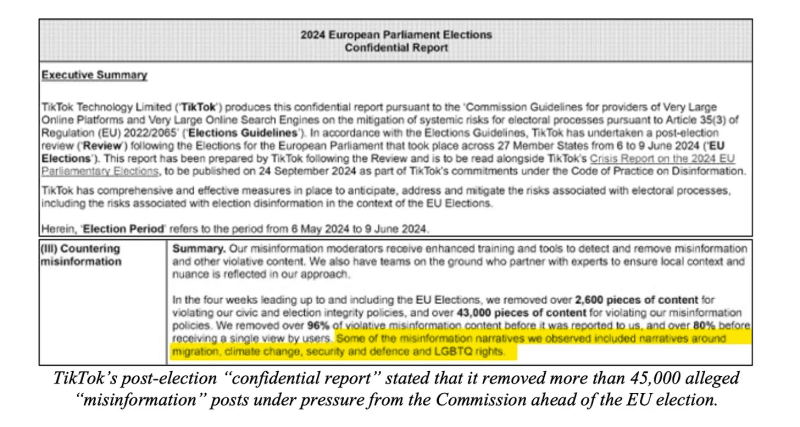

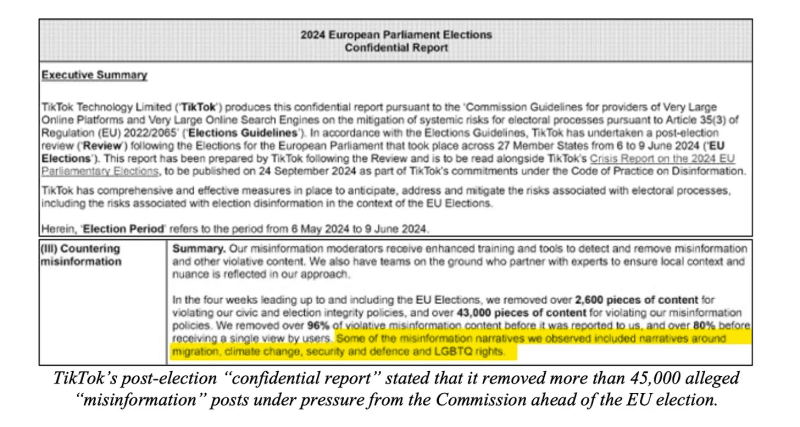

Did the platforms comply with the Commission’s 2024 election guidelines? Based on internal platform communications and exchanges between platforms and EU officials reproduced or summarized in the report, it appears that at least some platforms adjusted their policies in response. In particular, the committee report states that TikTok informed the European Commission that it had removed or restricted over 45,000 pieces of content identified as “misinformation,” including political speech on topics such as migration, climate change, security and defence, and LGBTQ rights, ahead of the 2024 EU elections.

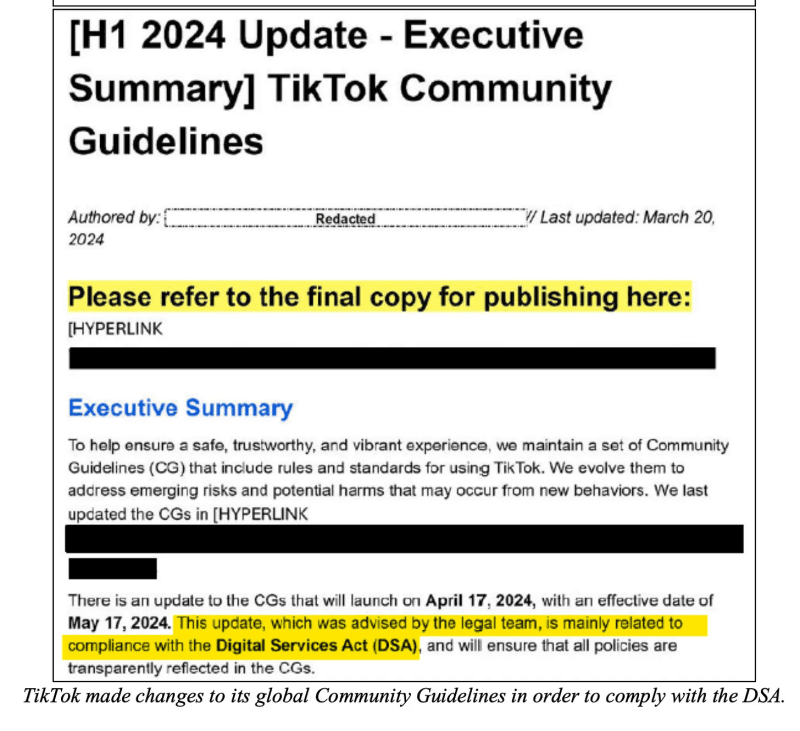

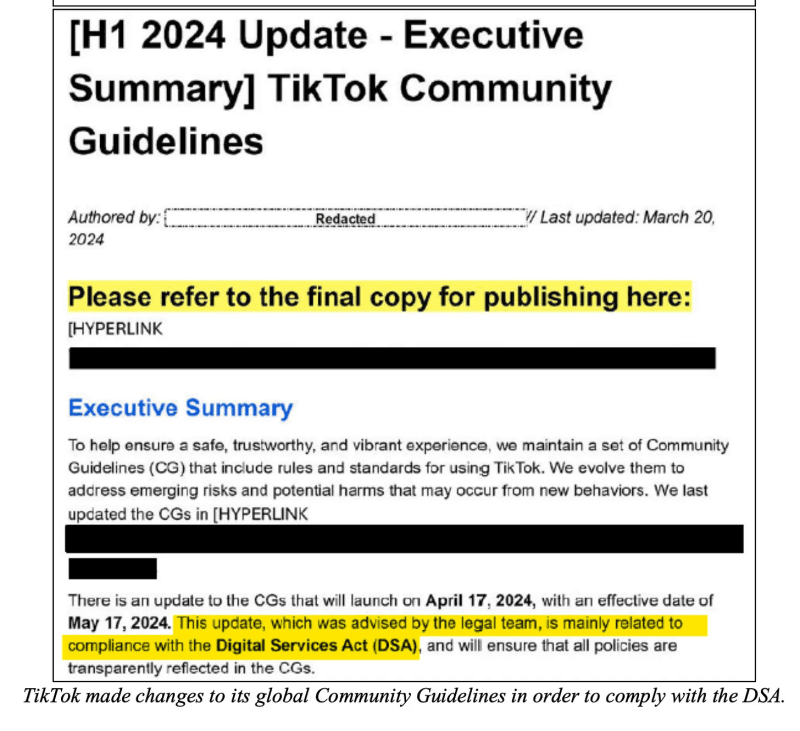

An executive summary of TikTok’s March 20, 2024 Community Guidelines update, cited in the report, states that the “updates” to TikTok’s community guidelines, “mainly related to compliance with the Digital Services Act,” included policies addressing “marginalizing speech and behavior,” “misinformation that undermines trust in the integrity of the democratic process,” and “misrepresenting authoritative information, such as scientific data.” By TikTok’s own admission, the main point of these changes was to comply with the DSA. They were not policy changes determined by independent criteria.

Now, if you think through the new policies TikTok launched in 2024 to ensure DSA compliance, it is pretty obvious that they can mean essentially whatever you want them to mean. For example, political discourse is inherently conflict-ridden, so almost any hostile remark could be perceived as “marginalizing speech and behavior.” Similarly, if someone makes a good-faith critique of the “integrity of the democratic process,” are they “undermining trust” in it? And what of the “misrepresentation” of “authoritative information”? Science can only function if so-called “authoritative” scientific claims are not artificially shielded from hard-hitting criticism.

Subtle but Real Regulatory Pressure

It is true that the specific contours of TikTok’s censorship regime were not directly imposed by the European Commission. The Commission is not generally in the business of ordering specific posts to be removed. However, TikTok’s new policies were clearly drafted under pressure from the DSA regulatory regime and its enforcers. TikTok, in developing measures to “mitigate risk” with a view to DSA compliance, had to somehow anticipate the views of regulators regarding vaguely defined risks like “hate speech,” “disinformation,” and damage to “civic discourse.”

Now, you might wonder: if these risk categories are so vaguely defined, how could TikTok manage to tailor its policies to pass muster with EU regulators? The answer is not complicated. When hundreds of millions of dollars are at stake (sanctions of up to 6% of a platform’s annual global turnover), a smart and well-resourced company would make it its business to “read between the lines” and figure out the politics and ideological proclivities of EU regulators. It could make an educated guess as to what sort of censorship would be music to the ears of the Commission.

For example, the Commission has consistently sided with the vaccine industry and with official health bodies like the WHO. The Commission represents a broadly leftist-progressive perspective on gender, hate speech, and environmental issues. Similarly, it is obvious that the Commission is interested in suppressing pro-Russian war propaganda. So even if the DSA does not include an exhaustive list of arguments to be targeted by censors, the Commission’s past legislative initiatives and statements make very clear what sorts of things it considers to be “systemic risks.” These intuitions are undoubtedly fine-tuned in the public and private meetings with EU officials to which platform executives are routinely summoned.

Flagging of “Problematic” Slovakian TikTok Accounts

If the House report is accurate, then it would appear that EU officials are not shy about wading in directly and telling a platform to look into a specific account or set of accounts considered “problematic.” The report states that in September 2023, four days ahead of the Slovak parliamentary election, the Commission sent TikTok a spreadsheet listing “problematic accounts of Slovak TikTok” for the platform to look into. The spreadsheet is reported to have contained at least 63 accounts, with follower counts ranging from 1,000 to 120,000. According to the House Judiciary report,

TikTok’s post-election summary report noted that it banned 19 of these accounts in direct response to the Commission’s request—five of them for “spreading hate.” Sixteen accounts flagged by the European Commission had “none [sic] or very low violations,” including “satirical accounts focused on politics.” Other accounts were placed on TikTok’s “watchlist.”

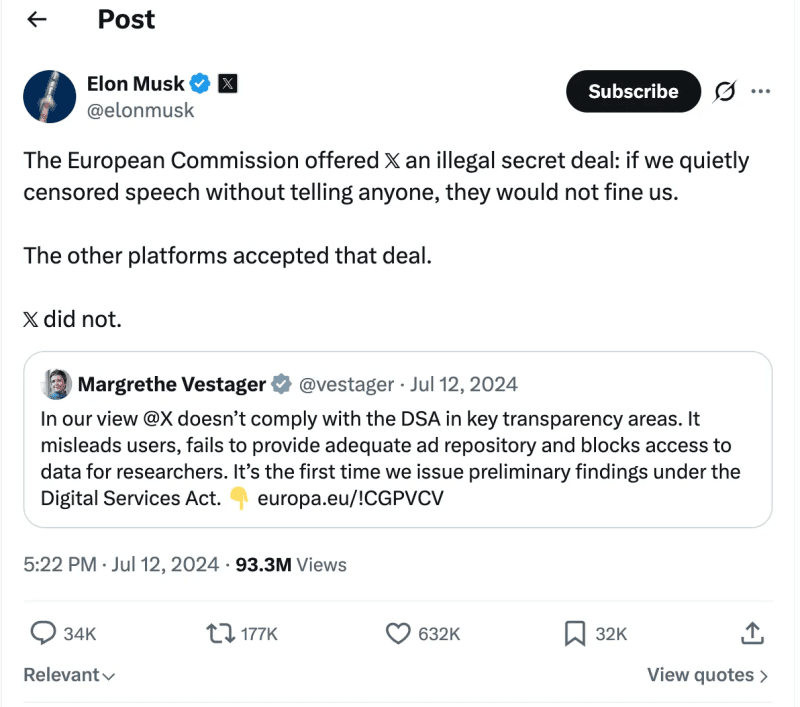

Standoff Between EU Commission and X

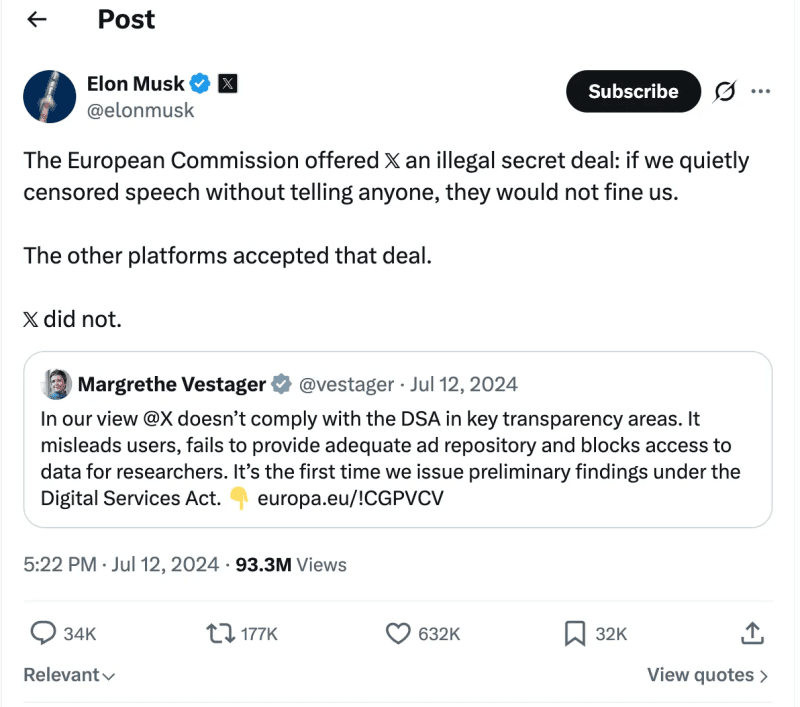

There is one social media giant that has conspicuously defied the EU Commission’s regulatory ambitions. In May 2023, X withdrew from the “voluntary” Code of Practice on Disinformation, a precursor to the DSA, and is apparently not instituting the sorts of moderation policies that the Commission has requested under the DSA regime. Consequently, the Commission has X in its crosshairs. It has imposed a hefty fine of €120 million on X for non-compliance with the Act, though, curiously, based on what appear to be somewhat rarefied offences, such as failing to honour Twitter’s old Blue Check system or storing information of public interest in the wrong format. This is the first fine imposed under the DSA.

Report Appears to Corroborate the Harms of DSA for Free Speech

I have only begun to scratch the surface of this report. But hopefully, the pattern is already clear enough: If this report is accurate — and if the communications it cites were properly authenticated — then it suggests that the DSA is empowering a privileged cadre of public officials to decide, through elaborate oversight mechanisms whose logic is impenetrable to the average citizen, what sorts of information and argumentation are fit for public consumption, and what sort must never reach the ears of citizens.

We did not actually need this report to realise that the “progressive” political elites of the European Union are erecting a complex and multi-layered censorship machine in plain sight. That much is already clear enough from the text of the Digital Services Act itself. However, up to now our knowledge of this regime was quite legalistic, based more on the logical ramifications of the wording of the DSA and the control mechanisms it creates, than on hard evidence of its application.

This report provides what may turn out to be quite damning documentary evidence of a range of mechanisms through which EU officials appear to have leveraged the DSA to pressure Big Tech companies to restrict Europeans’ political speech in far-reaching ways, on pain of suffering major economic sanctions. Furthermore, due to the technical and financial challenges of fragmenting platform rules, these restrictions have, in practice, frequently been applied outside Europe as well.

Now that these allegations and the accompanying evidence are out in the open, the EU Commission owes it to all of us to explain itself. So far, EU digital affairs spokesman Thomas Regnier has merely said, “On the latest censorship allegations. Pure nonsense. Completely unfounded.” Is Regnier suggesting that a US House Judiciary Committee fabricated emails and notes of meetings between EU officials and digital platform managers out of whole cloth?

I await with great interest a more serious response from the European Commission.

Republished from the author’s Substack

Published under a Creative Commons Attribution 4.0 International License

For reprints, please set the canonical link back to the original Brownstone Institute Article and Author.