On April 25, 2022, the Canadian Medical Association Journal (CMAJ) published a study titled “Impact of population mixing between vaccinated and unvaccinated subpopulations on infectious disease dynamics: implications for SARS-CoV-2 transmission.” Set in the context of Covid-19 and based on a simulation model of various mixes of unjabbed and jabbed populations, the study concluded that the unvaccinated pose a risk to the vaccinated.

The study immediately made waves in media outlets in many parts of the world, including WION News, The Hamilton Spectator, NDTV (India), DNA (India) and Times Now (India).

This conclusion runs against the layperson's observation that highly jabbed populations (e.g. Israel, various countries in Europe and the USA) have faced repeated surges, while populations with only a low percentage of people jabbed (e.g. India and various African countries) have not. In fact, in many places such as Singapore, South Korea and Hong Kong, even the first surge came only after a high percentage of the population had been jabbed. [Data references: Our World in Data].

The publication’s conclusion runs counter not only to layperson observation but also to other careful statistical studies. As early as September 2021, a study titled “Increases in COVID-19 are unrelated to levels of vaccination across 68 countries and 2947 counties in the United States” examined the statistical correlation between jab levels and reported Covid-19 cases, and in fact found a slight positive correlation: higher levels of jabs were associated with more Covid-19 cases.
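A cross-country comparison of this kind comes down to a correlation coefficient computed across regions, pairing each region's vaccination level with its case rate. The sketch below computes the standard Pearson coefficient, assuming that is the statistic of interest; the cited study's exact modeling choices are not reproduced here. An r near +1 means higher vaccination levels go with higher case rates, near -1 the reverse, and near 0 no linear relationship.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-deviations (covariance numerator).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations for each sample.
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / math.sqrt(var_x * var_y)
```

It would be called as `pearson_r(pct_fully_vaccinated, cases_per_100k)`, with one entry per country or county. Both argument names are hypothetical, and no real data is included here.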

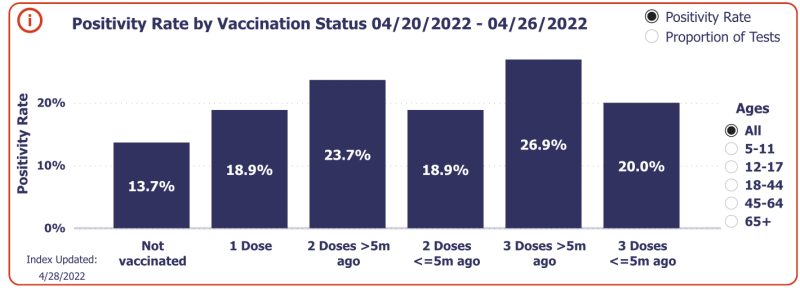

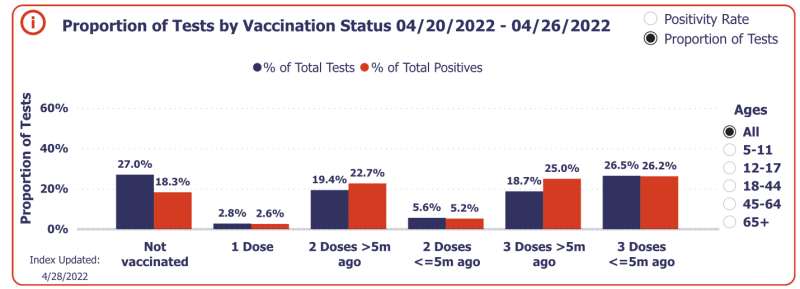

Since that statistical study, and with the arrival of Omicron, further data from around the world has shown that infection rates are higher in vaccinated (even boosted) populations. For instance, the graph above shows test positivity rates at various levels of vaccination in the U.S. The unvaccinated account for a higher percentage of tests but have the lowest test positivity. It is clear that the vaccine does nothing to prevent infection once its protection wanes; in fact, it could increase the chance of testing positive.
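Infection rates compared by vaccination status are conventionally summarized as vaccine efficacy, VE = 1 - RR. Here RR is the infection rate in the vaccinated divided by the rate in the unvaccinated; case-control studies estimate it with an odds ratio instead. A negative VE therefore means the vaccinated group had the higher rate, and a VE of -100% means that rate was twice as high. A minimal sketch of the arithmetic, assuming the two rates are directly comparable (same population, period and testing behaviour):

```python
def vaccine_efficacy(rate_vaccinated: float, rate_unvaccinated: float) -> float:
    """Return VE = 1 - RR, where RR is the vaccinated/unvaccinated rate ratio."""
    if rate_unvaccinated <= 0:
        raise ValueError("the unvaccinated rate must be positive")
    relative_risk = rate_vaccinated / rate_unvaccinated
    return 1.0 - relative_risk


print(vaccine_efficacy(2.0, 10.0))   # 0.8, i.e. 80% efficacy
print(vaccine_efficacy(20.0, 10.0))  # -1.0, i.e. -100% efficacy
```

Any rate unit works (per 100,000, per test, and so on) as long as both arguments use the same one, because only their ratio enters the formula.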

Despite all the above, how did the CMAJ study arrive at the conclusion it did? Let us now look at the technical merit of the study.

First, we note that it is a simulation study, not an analysis of real-world data. In science, while simulations can be useful in many situations, real-world data has much more merit, since no simulation can capture reality perfectly.

A closer look at the details of the simulation study reveals deep technical problems, listed below.

- The study says “We did not model waning immunity.” A preponderance of studies, as well as real-world data, shows waning immunity from the current Covid-19 jabs. Jab efficacy against both symptomatic infection and hospitalization is known to wane within 3 to 6 months. Therefore, not modeling waning immunity is a clear mismatch with reality.

- The simulation takes jab efficacy to be 80% (Table 1 in the study). This, too, is far from reality. A recent case-control study in England showed jab efficacy as low as -2.7% (minus 2.7%) six months after the second dose, and the population-wide U.S. data mentioned above shows a jab efficacy lower than -100% (minus 100%) for the triple-jabbed.

- The simulation takes the baseline immunity in the unjabbed to be 20% (Table 1 in the study). This is yet another parameter that is far from reality in most places in the world today. In India, sero-surveys have shown that most people have by now been naturally exposed to the virus. Even in the U.S., the CDC has said that most Americans have been exposed to the virus. This matters because various studies have affirmed that immunity after natural exposure is strong, long-lasting and far superior to jab-induced immunity.
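To see where the three criticized assumptions (no waning, 80% efficacy, 20% baseline immunity in the unjabbed) enter a model of this kind, here is a minimal, generic two-group SIR sketch with like-with-like mixing. It is written for illustration under an assumed model structure and is not the CMAJ authors' code. Only the 80% and 20% defaults come from the text above. The reproduction number, recovery rate, assortativity, seed size, vaccinated share and the `waning_rate` parameter are hypothetical values chosen here. Efficacy is modeled as all-or-nothing, meaning a fixed fraction of the vaccinated starts immune; this is one common convention among several.

```python
from dataclasses import dataclass


@dataclass
class Params:
    frac_vaccinated: float = 0.8    # hypothetical share of the population jabbed
    vaccine_efficacy: float = 0.8   # 80%, the value quoted from Table 1
    baseline_immunity: float = 0.2  # 20% prior immunity in the unjabbed, per Table 1
    r0: float = 6.0                 # hypothetical basic reproduction number
    recovery_rate: float = 0.2      # hypothetical: per day (mean 5-day infectious period)
    assortativity: float = 0.5      # hypothetical: 0 = random mixing, 1 = only like-with-like
    waning_rate: float = 0.0        # hypothetical R -> S rate per day; 0 = no waning
    initial_infected: float = 1e-4  # hypothetical seed fraction within each group


def simulate(p: Params, days: int = 365, dt: float = 0.1) -> dict[str, float]:
    """Euler-integrate a two-group SIR model (SIRS if waning_rate > 0).

    Returns cumulative new infections per capita of each group:
    "v" = vaccinated, "u" = unvaccinated.
    """
    sizes = {"v": p.frac_vaccinated, "u": 1.0 - p.frac_vaccinated}
    immune0 = {"v": p.vaccine_efficacy, "u": p.baseline_immunity}
    beta = p.r0 * p.recovery_rate  # transmission rate in a fully susceptible population
    S, I, R, cum = {}, {}, {}, {}
    for g, n in sizes.items():
        I[g] = p.initial_infected * n
        R[g] = immune0[g] * n
        S[g] = n - I[g] - R[g]
        cum[g] = 0.0
    for _ in range(int(days / dt)):
        new = {}
        for g in sizes:
            # Share of group g's contacts that fall in group h:
            # assortativity * [g == h] + (1 - assortativity) * size_h (sums to 1).
            force = 0.0
            for h, n_h in sizes.items():
                same = 1.0 if g == h else 0.0
                c = p.assortativity * same + (1.0 - p.assortativity) * n_h
                if n_h > 0:
                    force += beta * c * I[h] / n_h
            new[g] = force * S[g] * dt
        for g in sizes:
            recovered = p.recovery_rate * I[g] * dt
            waned = p.waning_rate * R[g] * dt
            S[g] += waned - new[g]
            I[g] += new[g] - recovered
            R[g] += recovered - waned
            cum[g] += new[g]
    return {g: (cum[g] / n if n > 0 else 0.0) for g, n in sizes.items()}
```

For example, `simulate(Params())` runs the model with the Table 1 efficacy and baseline immunity, while `simulate(Params(waning_rate=1/180, vaccine_efficacy=0.0, baseline_immunity=0.6))` swaps in different assumptions (all three values hypothetical) so the per-group results can be compared. With waning switched on, cumulative infections per capita can exceed 1 because reinfections are counted.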

Thus the much-publicized CMAJ simulation study is based on assumptions that are known to be flawed. Its conclusions may hold in an alternate world where immunity from natural exposure is poor and Covid-19 vaccines have high efficacy that does not wane, but they certainly do not hold in the real world.

It is also worth pointing out the “competing interests” statement in the publication, which says that one of the authors has served on various advisory boards for Covid-19 vaccines. Whether this indicates competence or bias is left to the reader to interpret, but responsible journalists should also disclose such competing interests when reporting on published results.

Published under a Creative Commons Attribution 4.0 International License

For reprints, please set the canonical link back to the original Brownstone Institute Article and Author.